OpenCLLink Programming

Programming OpenCL in the Wolfram Language is simple since the user need not write C wrapper code—which can be quite verbose, difficult to understand, and hard to debug. Using OpenCLLink also guarantees compatibility as new versions of the standard are released.

In this section a brief introduction is given to OpenCL programming. The section uses OpenCLFunctionLoad, which allows users to load OpenCL code and use it from within the Wolfram Language.

| OpenCLFunctionLoad | load an OpenCL function from source into the Wolfram Language |

OpenCL programming in the Wolfram Language.

Users are advised to read the much more detailed tutorial "CUDALink Programming".

OpenCL Programming

An OpenCL program is a small piece of code that performs a computation on each element of an input list. This first program will add 2 to each element.

__kernel void addTwo_kernel(__global mint * arry, mint len) {

int index = get_global_id(0);

if (index >= len) return;

arry[index] += 2;

}

The following is the decomposition of the above program into sections.

_kernel void addTwo_kernel (_global int ⋆ arry, int len) {

The _kernel construct declares the function to be run on the OpenCL GPU. The rest are function arguments with pointers having the _global prefix.

int index = get_global_id (0);

This gets the index value of the thread executing the function. The index values range from 0 to the number of threads launched.

This makes sure that the program does not write to memory beyond the length of the input array. Since the number of threads launched is in multiples of the block size, this conditional statement is needed if the size of the input array is not a multiple of the block size.

This adds two to each element.

Loading Program into the Wolfram Language

Once the program is written, it can be loaded into the Wolfram Language using OpenCLLink. This is done using OpenCLFunctionLoad.

Load the OpenCLLink application.

First, assign the program to a string.

This loads the function. The arguments to OpenCLFunctionLoad are the source code, the name of the function to load, the function signature, and the block dimension.

The result is the set of output list elements.

Porting CUDA to OpenCL

Since OpenCLLink handles the C wrapper code required in OpenCL programming, allowing the user to concentrate on the OpenCL kernel code, this is the only code needed to be ported from CUDA.

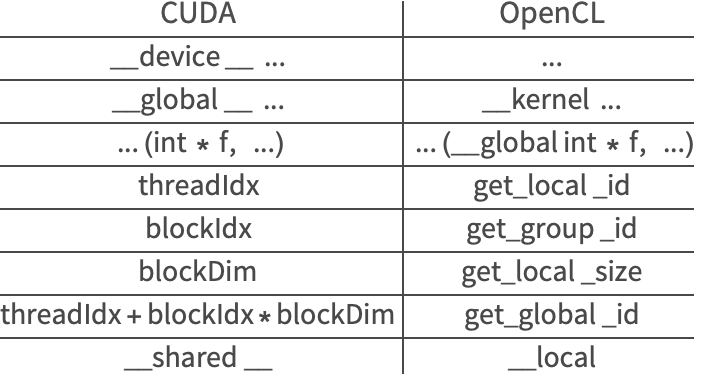

In terms of OpenCL program porting, there are one-to-one function renames between CUDA and OpenCL. The following table gives the correspondence.

In this section, use the above table to port the following CUDA code to OpenCL.

__global__ void myKernel(mint * global0Id, mint * global1Id, mint width, mint height) {

int xIndex = threadIdx.x + blockDim.x * blockIdx.x;

int yIndex = threadIdx.y + blockDim.y * blockIdx.y;

int index = xIndex + yIndex*width;

if (xIndex < width && yIndex < height) {

global0Id[index] = threadIdx.x;

global1Id[index] = threadIdx.y;

}

}

The following is the translation of the CUDA code into OpenCL.

The following was changed when porting:

In terms of loading the code, the only change that is needed is to replace CUDAFunctionLoad with OpenCLFunctionLoad.

Terminology

Users should note that there are some differences in terminology between CUDA and OpenCL. In the Wolfram Language, the best description of both is combined.

The following table gives the translation in terminology:

| CUDA | OpenCL | |

| streaming multiprocessor | device | |

| multiprocessor | compute unit | |

| global memory | global memory | |

| shared memory | local memory | |

| local memory | private memory | |

| kernel | program | |

| block | work group | |

| thread | work item |

Memory

The behavior of the memory manager is the same between CUDALink and OpenCLLink. The memory manager is discussed in depth in the CUDALink Memory Guide.

Since memory is bound to one link, CUDALink and OpenCLLink memories are not interchangeable.