"JarvisPatrick" (Machine Learning Method)

- Method for FindClusters, ClusterClassify and ClusteringComponents.

- Partitions data into clusters of similar elements using Jarvis–Patrick clustering.

Details & Suboptions

- "JarvisPatrick" is a neighbor-based clustering method. It works for clusters of arbitrary shapes and sizes. However, it is sensitive to its parameter values and can fail when clusters have different densities or are loosely connected.

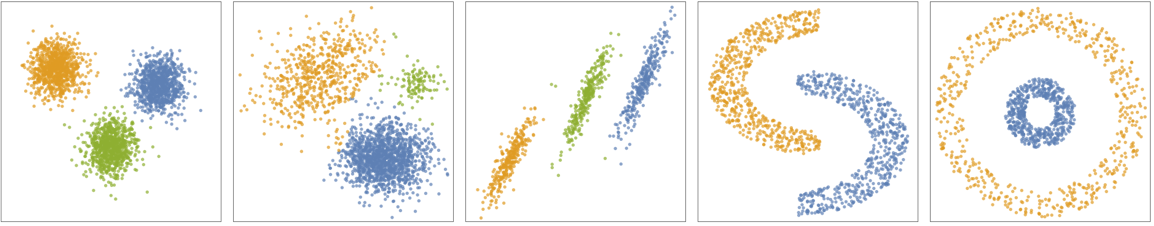

- The following plots show the results of the "JarvisPatrick" method applied to toy datasets:

-

- The algorithm groups points according to the similarity of their nearest neighbors. It measures the similarity between two points as the number of nearest neighbors they have in common ("shared nearest neighbors").

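- In "JarvisPatrick", the neighbors of a point are the points lying within a ball of radius ϵ around it. Any two neighboring points that share at least p neighbors belong to the same cluster, so a cluster consists of points linked to one another through chains of such pairs.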

- The following suboptions can be given:

| Suboption | Default | Meaning |
| --- | --- | --- |
| "NeighborhoodRadius" | Automatic | radius ϵ |
| "SharedNeighborsNumber" | Automatic | minimum number of shared neighbors p |
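The neighbor rule described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the Wolfram Language implementation: distances are Euclidean, the ball of radius ϵ is closed, a point counts as one of its own neighbors, and clusters are taken to be the connected components of the links between qualifying neighbor pairs. The function name `jarvis_patrick` and its `eps` and `p` arguments are hypothetical stand-ins for "NeighborhoodRadius" and "SharedNeighborsNumber"; the expression `len(nbrs[i] & nbrs[j])` is the shared-nearest-neighbors similarity.

```python
import math


def jarvis_patrick(points, eps, p):
    """Hypothetical sketch of Jarvis-Patrick clustering with eps-ball neighborhoods.

    Returns one integer cluster label per point (0, 1, 2, ... in order of
    first appearance).
    """
    n = len(points)
    # Assumption: the neighborhood of a point is every point (itself included)
    # within the closed ball of radius eps, using Euclidean distance.
    nbrs = [
        {j for j in range(n) if math.dist(points[i], points[j]) <= eps}
        for i in range(n)
    ]

    # Union-find structure: each point starts in its own cluster.
    parent = list(range(n))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    # Link every pair of neighbors that share at least p neighbors.
    for i in range(n):
        for j in nbrs[i]:
            if j > i and len(nbrs[i] & nbrs[j]) >= p:
                parent[find(i)] = find(j)

    # Turn union-find roots into consecutive cluster labels.
    roots = {}
    return [roots.setdefault(find(i), len(roots)) for i in range(n)]


# Toy data: two 3x3 grids of points with unit spacing, far apart from each other.
grid_a = [(x, y) for x in range(3) for y in range(3)]
grid_b = [(x + 10, y) for x in range(3) for y in range(3)]
points = grid_a + grid_b

print(jarvis_patrick(points, eps=1.5, p=3))            # nine 0s, then nine 1s
print(len(set(jarvis_patrick(points, eps=1.0, p=3))))  # 18
print(len(set(jarvis_patrick(points, eps=1.5, p=5))))  # 10
```

The three calls illustrate the parameter sensitivity noted earlier: at p = 3, raising ϵ from 1.0 to 1.5 turns 18 singletons into 2 clusters, while raising p to 5 at ϵ = 1.5 splits the same data into 10 clusters. Because each point is its own neighbor in this sketch, any two neighbors already share at least 2 points, so p ≤ 2 reduces to plain ϵ-connectivity.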

Examples

Basic Examples (2)

Options (5)

DistanceFunction (1)

"NeighborhoodRadius" (2)

Find clusters using "JarvisPatrick":

Find clusters by specifying the "NeighborhoodRadius" suboption:

Define a set of two-dimensional data points forming four loosely defined clusters:

Plot the clusterings of the data obtained with the "JarvisPatrick" method for several values of "NeighborhoodRadius":

Applications (1)

Create and visualize noisy 2D moon-shaped training and test datasets:

Train several ClassifierFunction objects using the "JarvisPatrick" method with different values of "NeighborhoodRadius":

Find and visualize the clusterings of the test set produced by each classifier, showing how small changes in "NeighborhoodRadius" affect the result:
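Applying a trained clusterer to a test set means assigning new points to clusters found on the training data. The Python sketch below shows one simple way this could work once the training points carry cluster labels: each test point receives the label of its nearest training point. This 1-nearest-neighbor rule, the helper name `assign_to_clusters`, and the toy data are assumptions for illustration; it is not necessarily how ClusterClassify assigns new points for the "JarvisPatrick" method.

```python
import math


def assign_to_clusters(train_points, train_labels, test_points):
    """Give each test point the cluster label of its nearest training point.

    Hypothetical helper: a 1-nearest-neighbor rule chosen for illustration.
    """
    labels = []
    for q in test_points:
        # Index of the training point closest to q (Euclidean distance).
        nearest = min(
            range(len(train_points)),
            key=lambda k: math.dist(q, train_points[k]),
        )
        labels.append(train_labels[nearest])
    return labels


# Assumed cluster labels, as if produced by clustering the training set.
train = [(0, 0), (1, 0), (10, 0), (11, 0)]
train_labels = [0, 0, 1, 1]
test = [(0.4, 0.2), (10.6, -0.3), (4.0, 1.0)]

print(assign_to_clusters(train, train_labels, test))  # [0, 1, 0]
```

With this rule, the test clustering changes with "NeighborhoodRadius" only through the training labels, so small changes in ϵ that merge or split training clusters carry over directly to the test set.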